Cognitive semantics is a linguistic approach that understands the meaning of words in relation to human cognition and ways of perceiving things. In this article, I would like to talk about the “cognition” of LLMs from the perspective of cognitive semantics.

In the background of cognitive semantics are fields such as Gestalt psychology and cognitive psychology. You have probably seen optical illusions like the following.

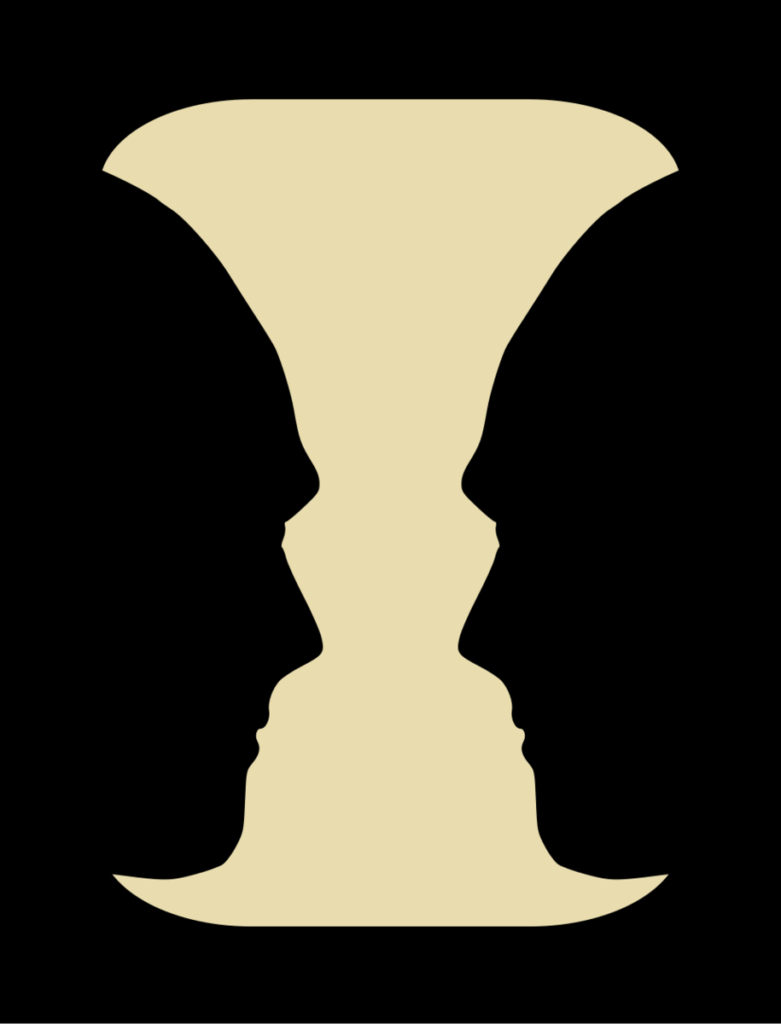

This is Rubin’s vase, an optical illusion that can be seen either as a vase or as two people facing each other.

It is exactly the same image, yet depending on how you are feeling that day — or in fact even from one instant to the next — it can be perceived as having entirely different “meanings.”

Even when two people are looking at exactly the same image at the same time, one may think, “This is a vase,” while the other perceives it as “two people facing each other.”

In other words, a single meaning is not inherent in the image itself; meaning is defined by the way the viewer perceives it.

The same kind of phenomenon occurs in language. For example, consider the following story.

I was short on money, so when I found a circus part-time job with an absurdly high hourly wage, I applied.

When I arrived at the venue on the day, I was forcibly shoved into a cage.

There was a lion in the cage.

The lion looked irritated, slowly approached me, and opened its mouth.

“Calm down, I’m just a part-timer too,” it said.

The way we perceive this text shifts dramatically before and after the punchline. Just before it, when the lion “opened its mouth,” we assume it is about to eat the narrator, but the punchline reveals that it opened its mouth in order to speak. On rereading, new interpretations arise that were not there the first time: perhaps the lion looked irritated not because it was hungry, but because it too had been forced into the cage. The original story spells out more explicitly that the lion is actually just a middle-aged man in a costume, but this modified version can be read in either of two ways: as a realistic setup in which the lion is a man in a costume, or as a fantastical one in which it really is a talking lion. In that respect, its structure resembles the optical illusion above.

The famous six-word story, “For sale: baby shoes, never worn,” is also a good example of a text whose interpretation changes as you read it.

In this way, a text does not have only one meaning inherent in itself. The exact same text, the exact same string of characters, can take on different meanings depending on the reader’s cognition — sometimes even opposite meanings.

Cognitive semantics takes the position that, as in these examples, the meaning of words should be understood through human cognition and perception.

One of the central ideas within cognitive semantics is prototype semantics. Prototype semantics holds that within any category, some members are more typical examples — that is, prototypes — while others are less typical.

For example, consider the category of birds. A sparrow, a robin, an ostrich, and a penguin are all unquestionably birds. However, people tend to feel that sparrows and robins are typical birds, whereas ostriches and penguins are atypical.

This also affects how language is used.

Suppose you say, “Dear God, please make me a bird in my next life —” and are reborn as an ostrich. How would you feel? Sure, an ostrich is a bird, but it cannot fly, it is kind of squat and ungainly, and you would probably feel different from what you had in mind.

What “bird” means here is presumably something like a robin, or perhaps a swan gliding gracefully through the sky. If the speaker and listener belong to a group that shares similar linguistic intuitions, that intended meaning should be conveyed, and you presumably chose those words believing that it would be. In that case, the robin or the swan functions as the prototype of the concept. God, however, does not understand this linguistic intuition, and thinks, “As long as it is a bird, anything should be fine… In that case, since I have the chance, I might as well make it the biggest bird possible,” and with that unhelpful extra kindness turns you into an ostrich.

A study by Yann LeCun and colleagues, From Tokens to Thoughts: How LLMs and Humans Trade Compression for Meaning (ICLR 2026), uses this framework of prototype semantics to reveal differences between how LLMs and humans “feel” concepts.

The object of this study is the embedding representations of LLMs. Roughly speaking, you could say that it investigates how an LLM thinks internally. In other words, rather than interviewing the LLM and asking it to report how it “feels,” the study focuses on what is happening inside the model. It would be a leap to say that an LLM’s embeddings are identical to its “way of feeling,” but with cognitive semantics in mind, that image is a helpful one for understanding the study.

First, the study found that LLM embeddings perform category classification in a way similar to humans. When the embeddings of words such as sparrow, chicken, ostrich, and penguin, or chair and dress, were examined, birds clearly clustered with birds, furniture with furniture, and clothing with clothing. From this perspective, the way LLMs carve up the world is broadly consistent with humans.
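To make the idea of "birds clustering with birds" concrete, here is a minimal sketch of one way to check it: assign each word to the category whose centroid (computed without the word itself) is closest in cosine similarity. The three-dimensional vectors, the `EMBEDDINGS` and `CATEGORIES` tables, and the `nearest_category` helper are all invented for illustration; they do not come from the study or from any real model, and the study's own procedure may differ.

```python
import math

# Hypothetical toy "embeddings", invented purely for illustration.
EMBEDDINGS = {
    "sparrow": [0.9, 0.1, 0.0],
    "penguin": [0.7, 0.3, 0.1],
    "ostrich": [0.8, 0.2, 0.2],
    "chair":   [0.1, 0.9, 0.1],
    "table":   [0.0, 0.8, 0.2],
    "dress":   [0.1, 0.1, 0.9],
    "shirt":   [0.2, 0.0, 0.8],
}
CATEGORIES = {
    "bird": ["sparrow", "penguin", "ostrich"],
    "furniture": ["chair", "table"],
    "clothing": ["dress", "shirt"],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def centroid(vectors):
    """Component-wise mean of a non-empty list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def nearest_category(word):
    """Return the category whose leave-one-out centroid is most similar to `word`."""
    scores = {}
    for category, members in CATEGORIES.items():
        # Exclude the word itself so it cannot vote for its own category.
        others = [EMBEDDINGS[m] for m in members if m != word]
        scores[category] = cosine(EMBEDDINGS[word], centroid(others))
    return max(scores, key=scores.get)


for word in EMBEDDINGS:
    print(word, "->", nearest_category(word))
```

With these toy vectors every word lands in its own category, which is the kind of agreement with human categories described above. Real embeddings have hundreds or thousands of dimensions, but the check is the same in spirit.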

However, LLM embeddings differed greatly from humans in what they regard as typical. Taking birds as an example, human participants were first asked to rate how typical each bird was. This yielded a ranking of what humans consider typical birds, something like the following:

1. Robin

2. Sparrow

3. Parakeet

...

9. Pelican

10. Ostrich

11. Penguin

Next, using the LLM’s embeddings, they computed the cosine similarity between the embedding of “bird” and the embedding of each bird, for example \( \cos(E_{\text{bird}}, E_{\text{penguin}}) \), and ranked the birds in descending order of similarity, that is, in the order in which the LLM regarded them as most similar to “bird” itself.
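The ranking step can be sketched in a few lines. The vectors in `E` below are made up, and deliberately chosen so that the atypical birds end up closer to "bird"; they are not values from any real model, and `rank_by_similarity` is a hypothetical helper name.

```python
import math

# Hypothetical toy embeddings, invented for illustration only.
E = {
    "bird":    [1.0, 1.0, 0.0],
    "robin":   [1.0, 0.2, 0.1],
    "ostrich": [0.9, 0.8, 0.3],
    "penguin": [0.8, 1.0, 0.1],
}


def cos(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rank_by_similarity(label, items):
    """Sort `items` by cosine similarity to the category label, most similar first."""
    return sorted(items, key=lambda word: cos(E[label], E[word]), reverse=True)


ranking = rank_by_similarity("bird", ["robin", "ostrich", "penguin"])
print(ranking)
```

In this toy setup the penguin comes first and the robin last, the reverse of the human typicality ranking. That outcome is built into the invented numbers; the point is only to show how the model-side ranking is produced.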

The rank correlation coefficient between the human ranking and the LLM ranking was no more than 0.15. In other words, humans and LLMs differ substantially in what they regard as a typical bird.
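For readers who want to see how two rankings are compared, here is a minimal sketch. The text above does not say which rank correlation was used, so this assumes Spearman's rho in its textbook form for rankings without ties. `HUMAN_ORDER` is a six-bird subset of the list above, re-ranked from 1 to 6, and `MODEL_ORDER` is entirely hypothetical.

```python
def spearman_rho(order_a, order_b):
    """Spearman's rank correlation for two orderings of the same items (no ties)."""
    if len(set(order_a)) != len(order_a) or set(order_a) != set(order_b):
        raise ValueError("both orderings must contain the same unique items")
    n = len(order_a)
    rank_a = {item: rank for rank, item in enumerate(order_a, start=1)}
    rank_b = {item: rank for rank, item in enumerate(order_b, start=1)}
    # Sum of squared rank differences, then the standard no-ties formula.
    d_squared = sum((rank_a[item] - rank_b[item]) ** 2 for item in order_a)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Subset of the human ranking above, most typical first.
HUMAN_ORDER = ["robin", "sparrow", "parakeet", "pelican", "ostrich", "penguin"]
# Hypothetical model ranking, invented for illustration.
MODEL_ORDER = ["penguin", "robin", "ostrich", "sparrow", "pelican", "parakeet"]

print(round(spearman_rho(HUMAN_ORDER, MODEL_ORDER), 3))
```

A value of 1 means the two rankings agree perfectly, -1 means one is the exact reverse of the other, and values near 0 mean the rankings are essentially unrelated.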

The cause of this result has not been fully clarified, but one possible factor is that atypical birds are more likely to be explicitly stated to be birds, and therefore more likely to co-occur with the category label. For example, people do say things like, “Penguins are actually birds,” but they do not usually go out of their way to say, “Robins are actually birds.” Biases in textual data like this may have pulled the embeddings of atypical birds closer to “bird.”

The paper discusses a different hypothesis: that the cause lies in the training objective. LLMs are trained by next-token prediction. The goal of next-token prediction is not to organize embedding representations; it is only to predict the next token. So there is no guarantee that these two goals will happen to align. In fact, models such as word2vec, which are explicitly designed for representation learning, showed relatively high rank correlations with humans, around 0.3 to 0.4, and even among transformer-based models, representation-learning models such as BERT tended to show relatively high rank correlations. Among models trained with next-token prediction, simply increasing model size or capability did not necessarily make their representations more consistent with humans; in some cases, stronger models even diverged further from humans. Indeed, if a model’s decoding ability becomes extremely high, it may be able to predict the next token by some mysterious means even while using internally tangled representations. For a highly capable model, using representations that match human intuitions might even become a handicap.
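To see why the two goals need not align, it helps to write the objective down. In its standard form (the notation here is my own assumption, not taken from the paper), next-token prediction minimizes the negative log-likelihood of each token \( x_t \) given the tokens before it, where \( \theta \) denotes the model parameters and \( T \) the sequence length:

\[ \mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta(x_t \mid x_1, \dots, x_{t-1}) \]

Nothing in this loss refers to the geometry of the embeddings directly. The representations are shaped only insofar as doing so helps predict \( x_t \).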

Because LLMs and humans differ in how they construe concepts, several problems can arise.

First, because LLMs tend to construe things differently from the way we do, they may fail to pick up the nuances that we leave implicit. For example, if you ask an LLM, “Please turn me into a bird,” it might turn you into an ostrich. If you protest, the LLM might respond, “An ostrich is a type of bird, so this is not incorrect.” You would hardly want to work with a partner whose intuitions are that badly out of sync.

Second, the LLM’s mysterious internal representations may be one cause of undesirable behavior or weaknesses. For example, LLMs are good at predicting in the forward direction but poor at predicting in the reverse direction [Zhu+ ICLR 2025]. If we take a passage from Jane Austen’s Pride and Prejudice and ask GPT-4, “What is the next sentence?”, it answers correctly with 65.9% accuracy; but if we ask, “What was the previous sentence?”, it answers correctly only 0.8% of the time. Because text is always presented in the same order during pretraining, a striking asymmetry in accuracy appears.

A similar phenomenon is also observed in humans. For example, can you say what letter comes after “L”? Many people can probably continue with “M”, “N”, “O”, and “P”. On the other hand, if you try to go backward and say the letters before “L” in order, many people probably cannot do it without starting again from “A”, “B”, “C”, and so on. One reason is that humans, too, encounter the alphabet much more often in the forward direction than in reverse.

One might think that if humans show the same effect, then perhaps it is not a problem. But humans show it only in limited cases like this one, whereas LLMs are trained essentially only by next-token prediction, so the same effect arises in all sorts of situations. The LLM’s mysterious representations are optimized to the extreme for next-token prediction. That may indeed improve next-token accuracy, but if the optimization is taken too far, the model may lose the ability to perform other basic tasks, such as answering in the reverse direction. Once such representations are learned during pretraining, it may be difficult to correct them later through finetuning.

This concern also exists within the LLM community itself. For example, there have been proposals to incorporate representation-learning methods such as contrastive learning in order to solve this asymmetry problem [Wang+ ICLR 2026].
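The alphabet example can be turned into a loose analogy in code. This is not a model of an LLM or of human memory. It only shows that a store which records nothing but "what comes next" answers forward questions in one step, while backward questions force it to recite from the start. The `NEXT` table and both helper functions are hypothetical names introduced for this sketch.

```python
import string

LETTERS = string.ascii_uppercase
# A memory that stores only forward links: "A" -> "B", "B" -> "C", and so on.
NEXT = {a: b for a, b in zip(LETTERS, LETTERS[1:])}


def next_letter(letter):
    """Forward question: a single lookup. Returns (answer, number of lookups)."""
    return NEXT[letter], 1


def previous_letter(letter):
    """Backward question: recite from "A" until the next link points at `letter`."""
    if letter == "A":
        raise ValueError('"A" has no previous letter')
    current, lookups = "A", 0
    while True:
        lookups += 1
        if NEXT[current] == letter:
            return current, lookups
        current = NEXT[current]


print(next_letter("L"))
print(previous_letter("L"))
```

Asking what follows “L” takes one lookup, whereas asking what precedes it takes eleven, because the only available route runs forward from “A”.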

Conclusion

LLMs are “language” models, and so they have been thought to handle only text; for that reason, it has long seemed difficult to train them on, or make them understand, factors outside of text, such as human cognition and ways of perceiving things.

However, cognitive semantics can serve as a bridge between cognition and language.

The exploration that combines LLMs and cognitive semantics has only just begun, but by making use of the approaches of cognitive semantics, it may become possible to understand the way LLMs “feel” language, or to teach LLMs a more human-like way of “feeling” it.

Have you ever felt that current LLMs fail to pick up meanings that are left implicit? I hope this article gives you an opportunity to think about the way LLMs recognize language.

Acknowledgment: I was introduced to cognitive semantics by Professor Sho Yokoi of the National Institute for Japanese Language and Linguistics at last year’s Annual Conference of the Japanese Society for Artificial Intelligence. I would like to express my gratitude here.

Author Profile

Ryoma Sato

Currently an Assistant Professor at the National Institute of Informatics, Japan.

Research Interests: Machine Learning and Data Mining.

Ph.D. (Kyoto University).